Configuring Keebo Warehouse Optimization for Databricks

Warehouse Optimization for Databricks is currently in preview. Reach out to Keebo support for access and onboarding.

Connecting Warehouse Optimization to Databricks is a two-phase process. Phase 1 must be completed by a Databricks admin before Phase 2 can begin in the Keebo portal.

Phase 1 — Databricks Environment Configuration

This phase requires a Databricks admin with Account Admin and Metastore Admin permissions on the workspaces being connected, and it must be finished before onboarding starts in the Keebo portal.

As you complete Phase 1, use the Values Reference table below to record the credentials and resource identifiers you will need in Phase 2.

Values Reference

Collect these values during Phase 1 and keep them available for the Keebo onboarding wizard in Phase 2.

| Group | Field | Description | Example |
|---|---|---|---|
| Account | Account ID | From the Databricks Account Console URL, after account_id= | ab45cdef-123a-4b5c-67de-89012ab345c6 |
| Service Principal | Client ID | Displayed on the service principal detail page in the Account Console | 18d2ad6f-80e7-4662-9313-22bd73f783a2 |
| Service Principal | Client Secret | Generated when clicking Generate Secret; copy it immediately, because it cannot be viewed again | dose2cdb6ac847e9643d6d63225a7a92159e |
| Workspace(s) | Workspace URL (one per workspace) | Address bar URL when logged into the Databricks workspace | https://xyz.cloud.databricks.com |
| Warehouse(s) | Warehouse ID (one per warehouse) | Displayed next to the warehouse name in SQL Warehouses | abc1234567890ef |
| Catalog / Volume | Catalog name | Catalog created for Keebo usage views and export volume | keebo (default) |
| Catalog / Volume | Export volume path | Fixed Unity Catalog path for batch workload export | keebo.kwo.export |
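The table can also be tracked in a small script while Phase 1 is in progress. The following is a minimal sketch, not part of Keebo's tooling: the `KeeboValues` class and both helper functions are hypothetical names, and the Account Console URL in the usage note is only an assumed shape with an `account_id=` query parameter.

```python
from dataclasses import dataclass, field, fields
from urllib.parse import parse_qs, urlparse


@dataclass
class KeeboValues:
    """Hypothetical container mirroring the Values Reference table."""
    account_id: str = ""
    client_id: str = ""
    client_secret: str = ""
    workspace_urls: list = field(default_factory=list)
    warehouse_ids: list = field(default_factory=list)
    catalog_name: str = "keebo"                    # default from the table
    export_volume_path: str = "keebo.kwo.export"   # fixed path from the table


def account_id_from_url(url: str) -> str:
    """Return the value after account_id= in a URL, or '' if it is absent."""
    query = parse_qs(urlparse(url).query)
    return query.get("account_id", [""])[0]


def missing_fields(values: KeeboValues) -> list:
    """Return the names of fields that are still empty."""
    return [f.name for f in fields(values) if not getattr(values, f.name)]
```

For example, `missing_fields(KeeboValues(account_id=account_id_from_url(console_url)))` lists what still has to be collected before Phase 2.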

How Is the Keebo Service Principal Created?

Keebo uses OAuth M2M authentication to integrate with Databricks workspaces. Follow these steps to set up an OAuth2 service principal in the Databricks Account Console.

These instructions assume a single Keebo service principal is used to authenticate with all Databricks workspaces. Contact Keebo support for assistance with multiple service principals per account.

-

Log in to the Databricks Account Console:

- AWS: https://accounts.cloud.databricks.com

- Azure: https://accounts.azuredatabricks.net

- GCP: https://accounts.gcp.databricks.com

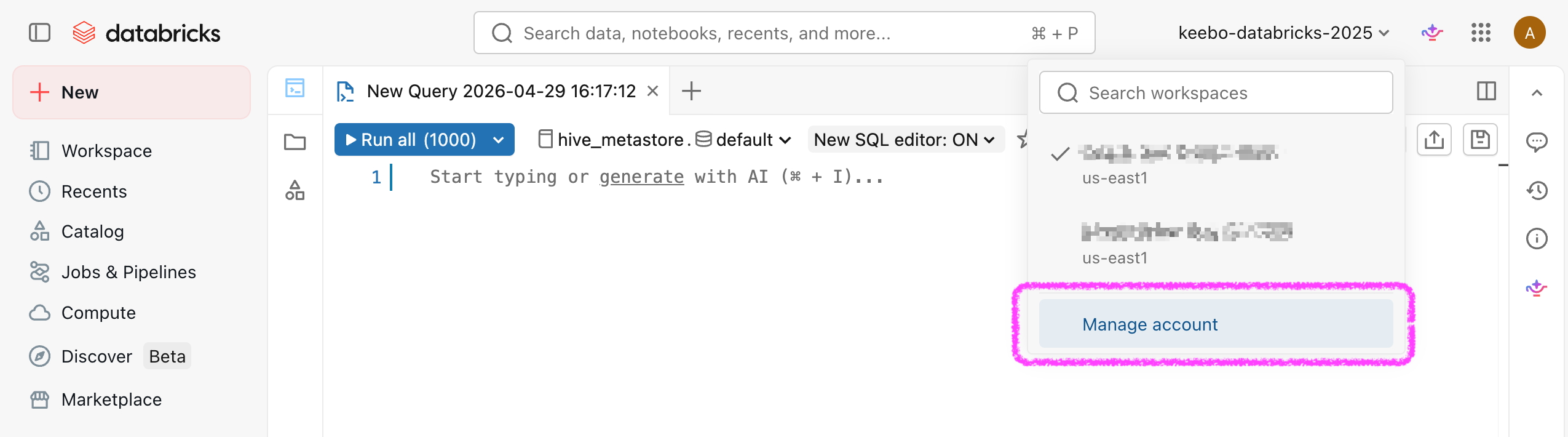

You can also navigate to the Databricks Account Console from the Databricks Workspace UI

Note the Account ID from the URL (the value after

account_id=) and save it to the Values Reference table. -

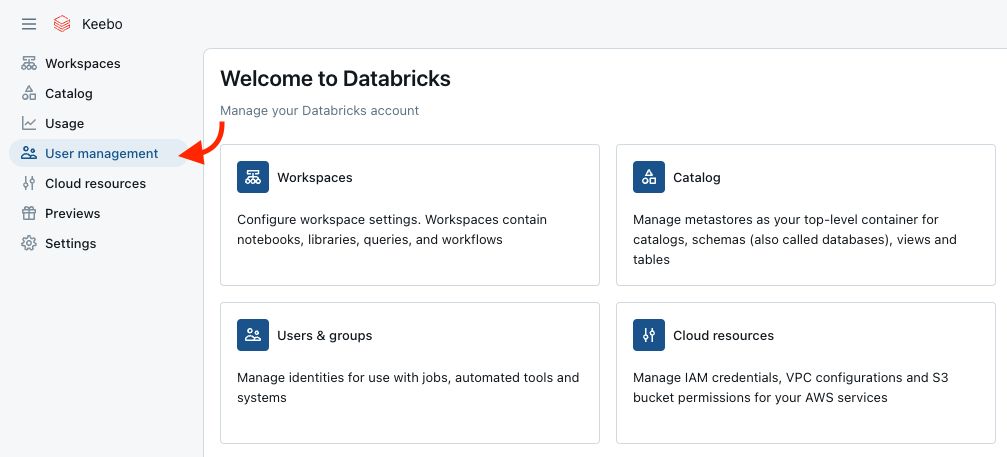

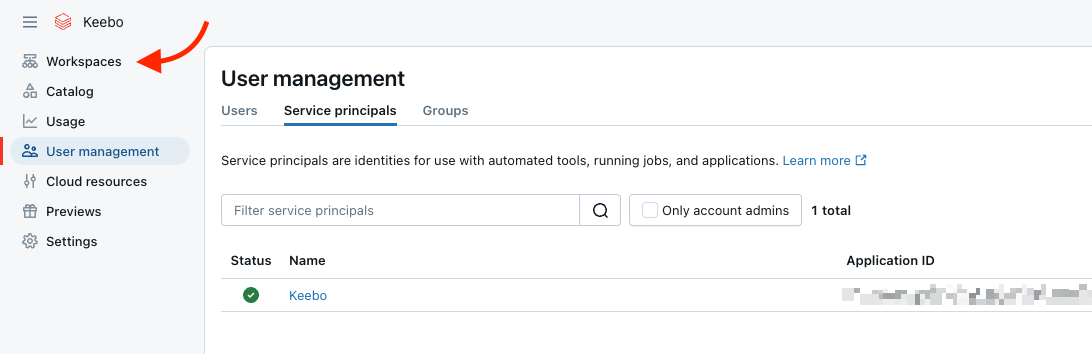

In the left navigation, select User Management.

-

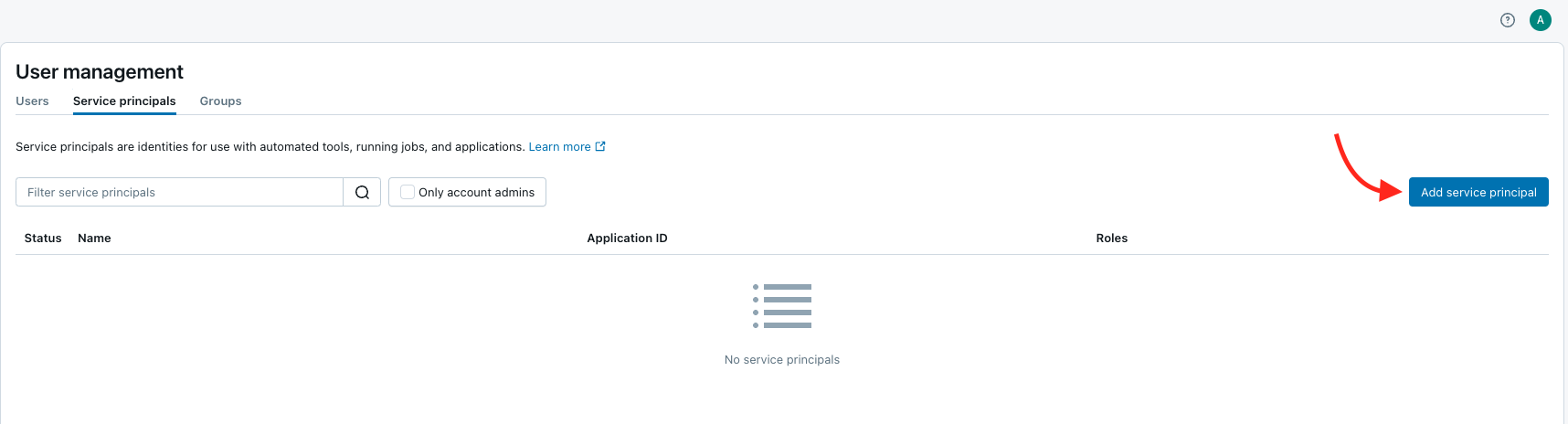

Open the Service Principals tab and click Add service principal.

-

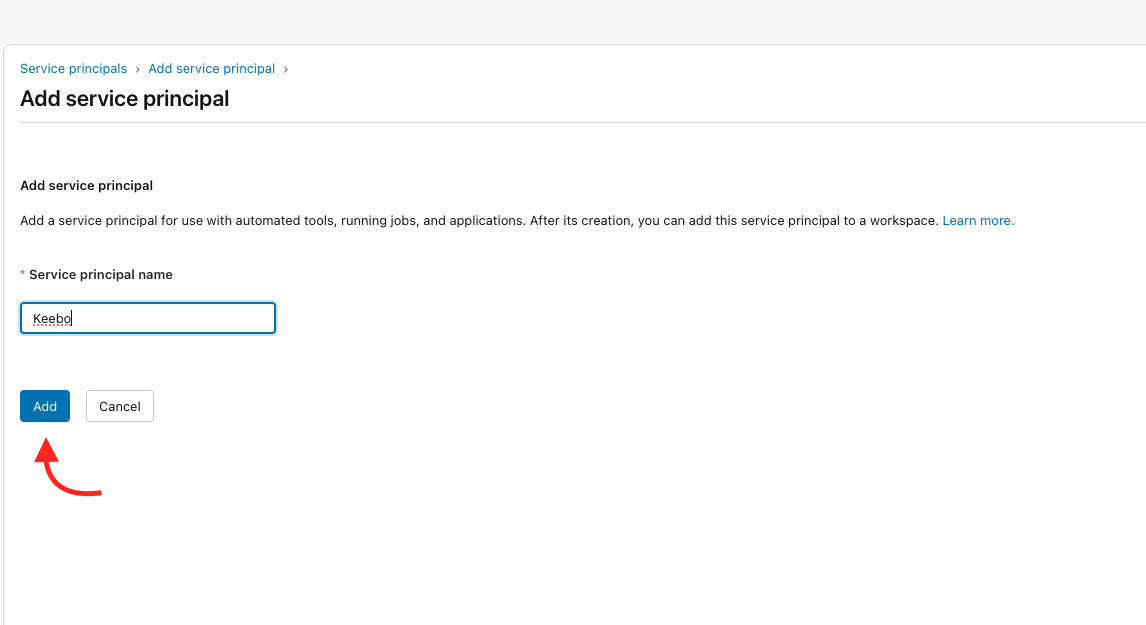

(Azure only) Select "Databricks managed" under the "Management" section.

-

Enter a name for the service principal (recommended: "Keebo") and click Add.

-

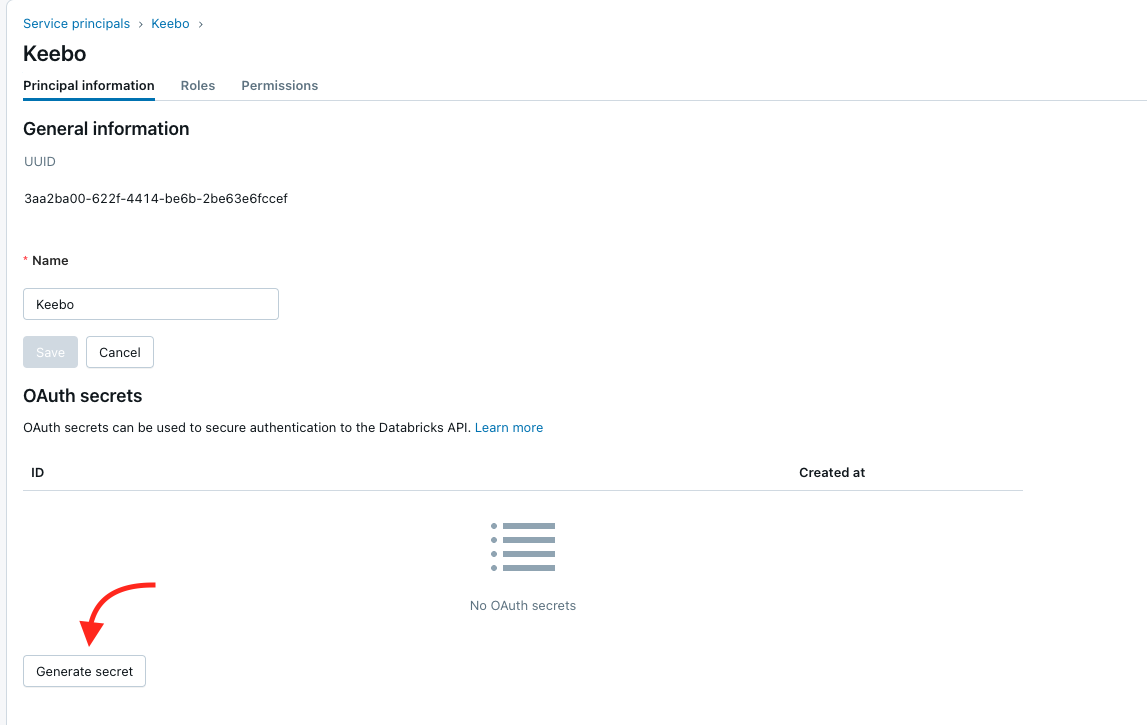

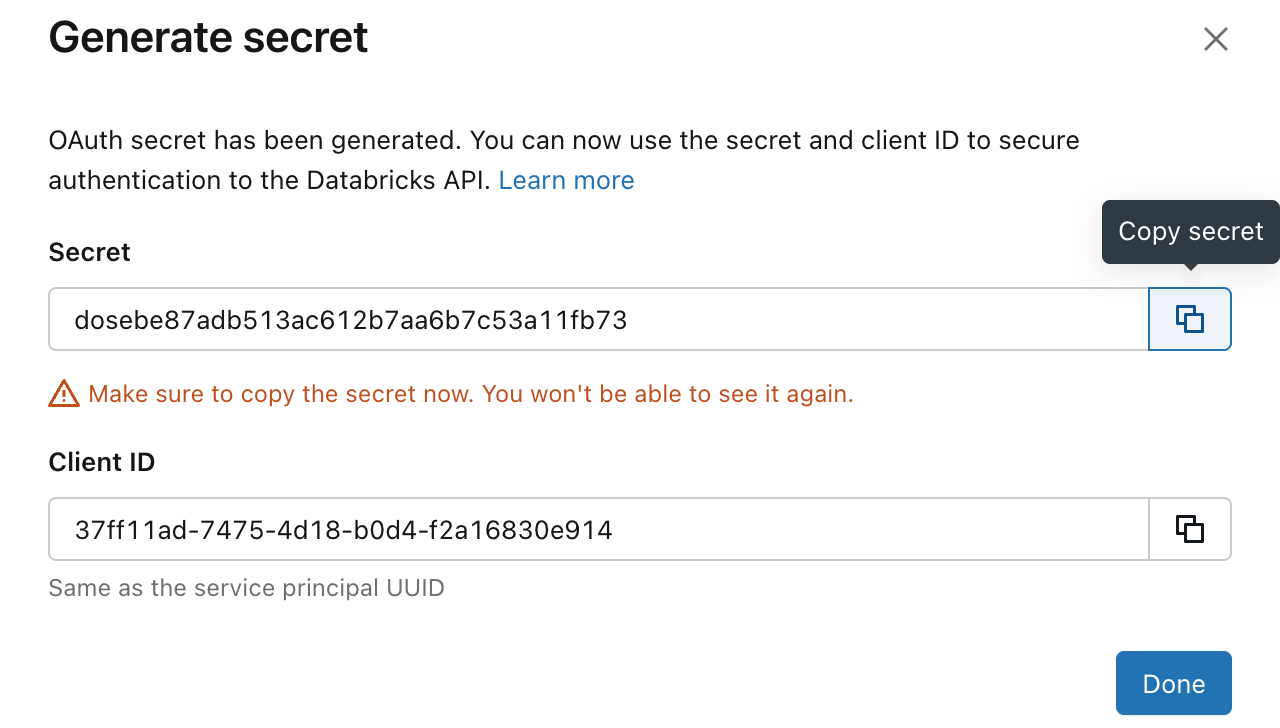

Select the newly created service principal and click Generate Secret.

-

Copy the Client ID and Secret from the pop-up and store them in the Values Reference table. The Secret cannot be viewed again after this step.
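To illustrate what OAuth M2M means in practice, the sketch below builds (but does not send) a client-credentials token request from the Client ID and Secret. It rests on assumptions that should be checked against the Databricks OAuth documentation: the workspace-level `/oidc/v1/token` path and the `all-apis` scope reflect a general understanding of Databricks M2M authentication, and `build_token_request` is a hypothetical helper. How Keebo itself requests tokens is not described here.

```python
import base64
from urllib.parse import urlencode


def build_token_request(workspace_url: str, client_id: str, client_secret: str) -> dict:
    """Describe the HTTP POST for a client-credentials token (nothing is sent)."""
    # The client ID and secret are passed as HTTP Basic credentials.
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    return {
        "method": "POST",
        # Assumed workspace-level token endpoint; verify for your cloud.
        "url": workspace_url.rstrip("/") + "/oidc/v1/token",
        "headers": {
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        # Assumed scope value; verify against the Databricks documentation.
        "body": urlencode({"grant_type": "client_credentials", "scope": "all-apis"}),
    }
```

Returning a plain description of the request keeps the secret out of any network call until the values have been reviewed; an HTTP client of your choice can then send it.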

How Are Workspace Permissions Assigned?

The service principal needs access to every workspace being connected with Keebo. Repeat these steps for each workspace.

-

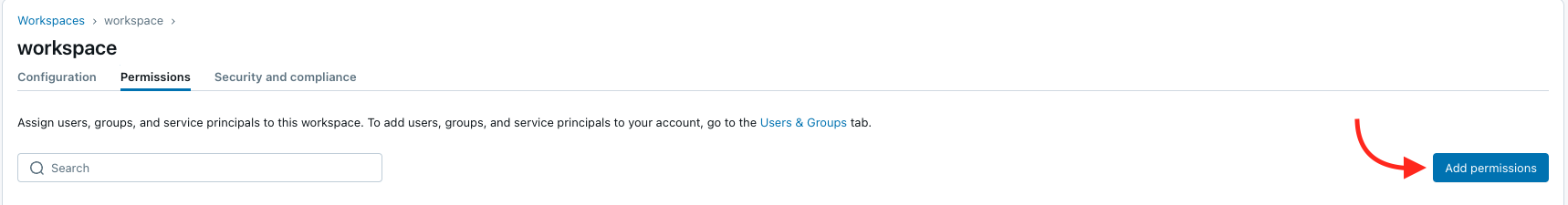

In the Account Console, click Workspaces in the sidebar.

-

Click the name of the workspace to connect.

-

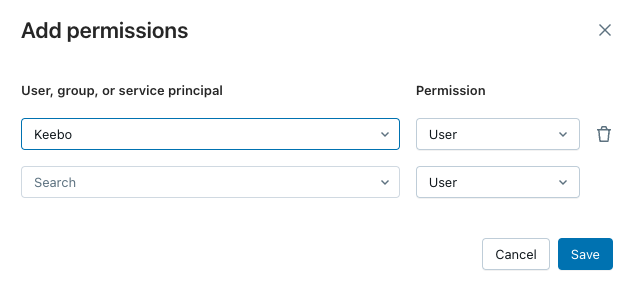

Open the Permissions tab and click Add permissions.

-

Search for and select the Keebo service principal, set the permission level to User, and click Save.

Save the workspace URL (the address bar URL for this workspace) to the Values Reference table.

Do not run GRANT ... ON CATALOG system for the Keebo service principal. Keebo uses a views-based approach instead; see How Is Keebo Granted Access to Usage Data?.

Entitlements

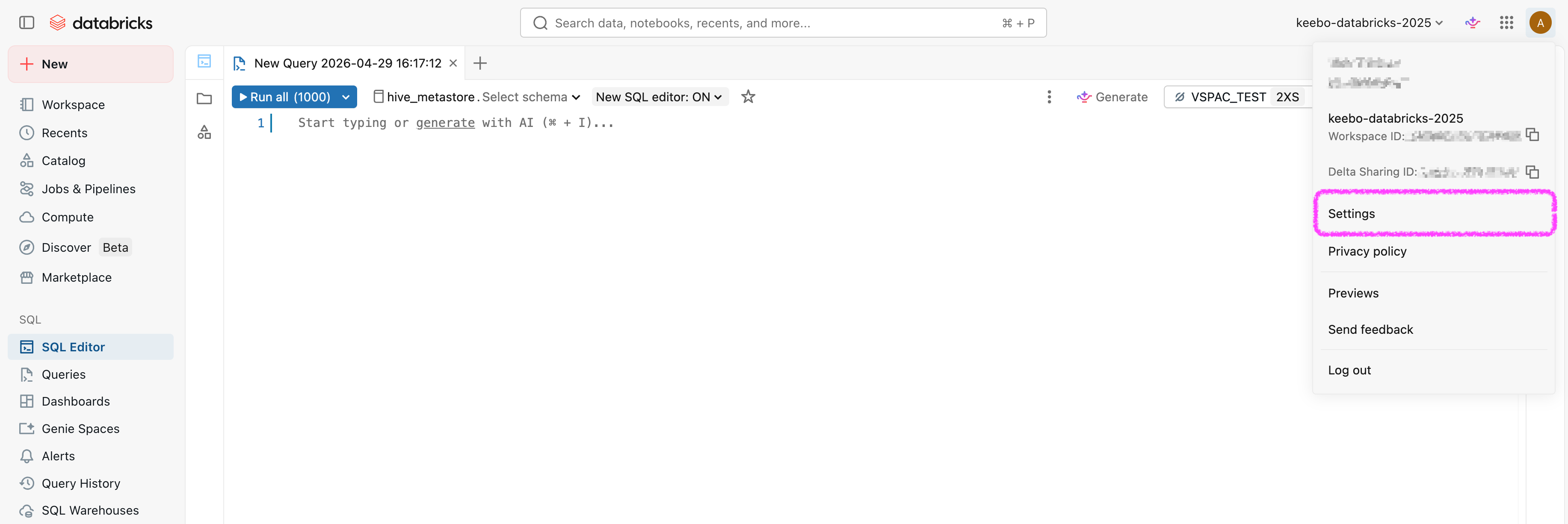

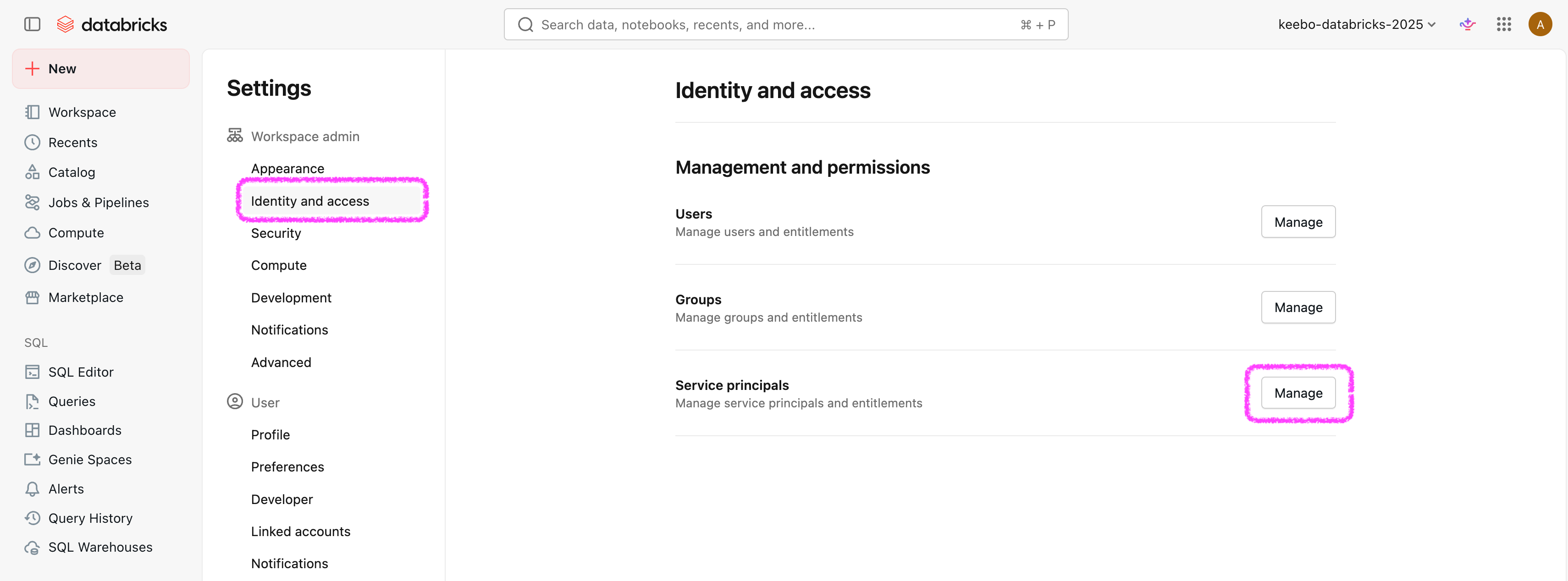

The User permission on the workspace and CAN_MANAGE on warehouses are separate from Databricks entitlements (Workspace access, Databricks SQL access, and others). Depending on group defaults and workspace configuration, the Keebo service principal may need those entitlements granted explicitly for API access. To check them, open the user menu in the workspace and go to Settings → Identity and access → Service principals, then select the principal; its entitlements are listed there.

If a control is greyed out, it is inherited from a group (commonly the workspace users group). Change entitlements on that group or see Databricks entitlements.


How Are System Schemas Enabled?

The views that Keebo uses read from the system catalog. The following system schemas must be enabled: billing, compute, and query.

- Find the Metastore ID of the workspace (for example, run databricks metastores list if using the CLI).

- Verify that the system schemas are enabled:

databricks system-schemas list <metastore-id>

The output should show billing, compute, and query with state ENABLE_COMPLETED. If not, enable them:

databricks system-schemas enable <metastore-id> compute

databricks system-schemas enable <metastore-id> billing

databricks system-schemas enable <metastore-id> query

If these commands do not complete successfully, contact Databricks support.
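The check above can be automated by parsing the CLI's JSON output. This is a minimal sketch under stated assumptions: the output is taken to be either a list of objects or an object with a `schemas` list, each entry carrying `schema` and `state` keys. Confirm the actual shape produced by your CLI version before relying on it; `schemas_to_enable` is a hypothetical helper.

```python
import json

# The three system schemas this guide requires.
REQUIRED_SCHEMAS = ("billing", "compute", "query")


def schemas_to_enable(cli_json: str) -> list:
    """Return required schemas whose state is not ENABLE_COMPLETED."""
    data = json.loads(cli_json)
    # Accept either a bare list or an object wrapping it under "schemas".
    entries = data.get("schemas", []) if isinstance(data, dict) else data
    states = {entry.get("schema"): entry.get("state") for entry in entries}
    return [name for name in REQUIRED_SCHEMAS if states.get(name) != "ENABLE_COMPLETED"]
```

Each name returned corresponds to one `databricks system-schemas enable <metastore-id> <schema>` command still to be run.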

How Is Keebo Granted Access to Usage Data?

Keebo reads usage data through views in a catalog and schema that the organization controls, not by direct access to the system catalog. The Keebo app generates the exact SQL and verifies the setup is correct — this is completed in Phase 2. The SQL script will:

- Create the catalog and schema (if needed)

- Create or replace the four views that read from system tables (warehouse events, warehouses, billable usage, query history)

- Grant the Keebo service principal SELECT on those views only

- If the Keebo service principal previously had direct access to the system catalog, include REVOKE statements to remove that access

The catalog name defaults to keebo. Record it in the Values Reference table.

How Is the Export Volume Created?

Batch workload export writes Parquet files under a fixed Unity Catalog path: catalog keebo, schema kwo, volume export (keebo.kwo.export). This is separate from the catalog and schema configured for usage views. If the volume is not created before export jobs run, they will fail with [UC_VOLUME_NOT_FOUND] Volume keebo.kwo.export does not exist.

Step 1 — Create the keebo catalog

Using keebo as your views catalog? If you configured keebo as the catalog for your usage views, the catalog already exists, so skip to Step 2.

How you create the catalog depends on your account's Unity Catalog storage configuration:

- Accounts with a metastore-level managed storage location: run the following in the SQL Editor:

CREATE CATALOG IF NOT EXISTS keebo;

- Accounts using Default Storage (newer auto-enabled workspaces): CREATE CATALOG without a storage path will fail with an INVALID_STATE error. Either:

  - Use the Databricks UI: Go to Data > Catalogs > Create catalog and name it keebo. The UI handles storage assignment automatically.

  - Specify a managed location in SQL:

CREATE CATALOG keebo

MANAGED LOCATION '<your-cloud-storage-uri>';

The storage URI depends on your cloud (e.g. s3://bucket/path on AWS, abfss://... on Azure, gs://... on GCP). The location must be within an external location that you have the CREATE MANAGED STORAGE privilege on.

See the Databricks documentation on managed storage locations for details.

Step 2 — Create the schema, volume, and grants

Once the keebo catalog exists, run the following in the Databricks SQL Editor (typically as a Metastore Admin or equivalent):

CREATE SCHEMA IF NOT EXISTS keebo.kwo

COMMENT 'Keebo workload export';

CREATE VOLUME IF NOT EXISTS keebo.kwo.export

COMMENT 'Keebo export volume (managed volume)';

Grant the Keebo service principal permission to read and write the volume. Replace <PRINCIPAL> with the principal identifier required in your environment (see Unity Catalog privileges for your cloud):

GRANT USE CATALOG ON CATALOG keebo TO `<PRINCIPAL>`;

GRANT USE SCHEMA ON SCHEMA keebo.kwo TO `<PRINCIPAL>`;

GRANT READ VOLUME, WRITE VOLUME ON VOLUME keebo.kwo.export TO `<PRINCIPAL>`;
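If the principal identifier is substituted by script rather than by hand, the three statements above can be generated as follows. This is only a sketch: `export_volume_grants` is a hypothetical helper, and which identifier to pass in still depends on your environment, as noted above.

```python
def export_volume_grants(principal: str) -> list:
    """Return the three GRANT statements for the fixed keebo.kwo.export path."""
    # The identifier is wrapped in backticks, so it must not contain one itself.
    if not principal or "`" in principal:
        raise ValueError("principal must be non-empty and must not contain a backtick")
    quoted = f"`{principal}`"
    return [
        f"GRANT USE CATALOG ON CATALOG keebo TO {quoted};",
        f"GRANT USE SCHEMA ON SCHEMA keebo.kwo TO {quoted};",
        f"GRANT READ VOLUME, WRITE VOLUME ON VOLUME keebo.kwo.export TO {quoted};",
    ]
```

Joining the result with newlines yields a script that can be pasted into the Databricks SQL Editor.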

Verify

Run the following in the Databricks SQL Editor:

SHOW VOLUMES IN SCHEMA keebo.kwo;

You should see export in the results. Save the export volume path (keebo.kwo.export) to the Values Reference table.

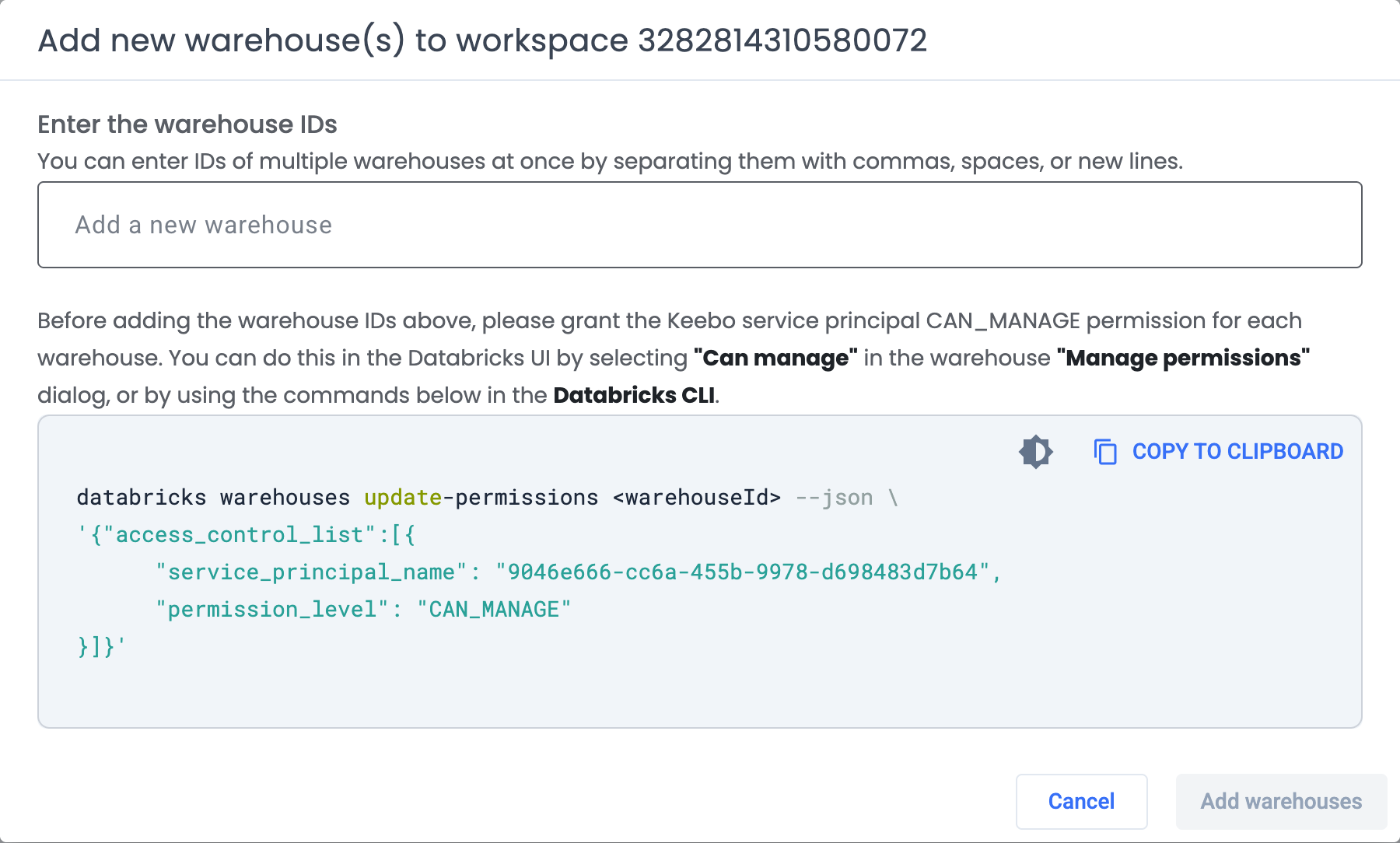

How Are Warehouse Permissions Granted?

For each warehouse to be optimized, the Keebo service principal must be granted CAN_MANAGE permission. This allows Keebo to resize the warehouse and change its configuration. If warehouse APIs fail after this step, also verify entitlements on the service principal.

Add each warehouse ID to the Values Reference table before granting permissions.

Using the Databricks UI

- In the Databricks workspace, open SQL Warehouses from the left navigation.

- Click the three-dot menu next to the warehouse name and open Permissions.

- Search for the Keebo service principal and set its permission to Can Manage.

- Save. Repeat for every warehouse to optimize.

Using the CLI

Replace <clientId> with the service principal Client ID and <warehouseId> with the warehouse ID:

databricks warehouses update-permissions <warehouseId> --json '{

"access_control_list": [{

"service_principal_name": "<clientId>",

"permission_level": "CAN_MANAGE"

}]

}'
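When many warehouses need the same grant, the command above can be generated per warehouse instead of edited by hand. The sketch below only builds the command string from the payload shown in this section; it does not run the CLI, and `update_permissions_command` is a hypothetical helper name.

```python
import json
import shlex


def update_permissions_command(warehouse_id: str, client_id: str) -> str:
    """Build the CLI command that grants CAN_MANAGE on one warehouse."""
    payload = {
        "access_control_list": [
            {"service_principal_name": client_id, "permission_level": "CAN_MANAGE"}
        ]
    }
    # shlex.quote keeps the JSON intact as a single shell argument.
    return "databricks warehouses update-permissions {} --json {}".format(
        shlex.quote(warehouse_id), shlex.quote(json.dumps(payload))
    )
```

Looping over the warehouse IDs recorded in the Values Reference table and printing each result gives a reviewable list of commands before anything is executed.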

How Is Network Access Configured?

If the Databricks account or workspace has IP access lists enabled, Keebo's egress IPs must be added to the allow list. If IP access lists are not enabled, skip this step.

IP access lists require the Enterprise pricing tier in Databricks.

The Keebo egress IP addresses are listed in Databricks Security Setup.

Option 1: Account Console (UI)

- Log in to the Databricks Account Console and go to Security > Account console IP access list.

- Enable the IP access list feature if it is not already on.

- Click Add rule, select ALLOW, provide a label (e.g. "Keebo"), and enter the Keebo IP addresses.

- Save the rule. Changes may take a few minutes to take effect.

See the Databricks IP access list documentation for details.

Option 2: Workspace IP Access Lists (CLI)

# Enable IP access lists for the workspace (if not already enabled)

databricks workspace-conf set-status --json '{"enableIpAccessLists": "true"}'

# Create an allow list entry for Keebo

databricks ip-access-lists create --json '{

"label": "Keebo",

"list_type": "ALLOW",

"ip_addresses": [

"34.123.209.159/32",

"34.134.199.98/32",

"34.136.192.189/32",

"34.123.121.251/32",

"35.226.95.64/32",

"35.232.243.181/32",

"34.41.176.165/32",

"35.224.13.139/32",

"34.29.108.17/32",

"34.30.123.135/32"

]

}'

Once any IP address is added to an allow list, all IP addresses not on the list are blocked. Ensure your own network is included before enabling this feature to avoid losing access.

See the Databricks workspace IP access list documentation for details.
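Because enabling an allow list blocks every address that is not on it, it can help to confirm that your own egress address is covered before turning the feature on. The following is a small sketch using Python's standard `ipaddress` module; `is_allowed` is a hypothetical helper, and the addresses in the usage note are placeholders from a documentation range.

```python
import ipaddress


def is_allowed(address: str, allow_list: list) -> bool:
    """Return True if the address falls inside any CIDR entry of the allow list."""
    ip = ipaddress.ip_address(address)
    # strict=False tolerates entries whose host bits are set, e.g. 10.0.0.5/24.
    return any(ip in ipaddress.ip_network(entry, strict=False) for entry in allow_list)
```

For example, `is_allowed("203.0.113.7", planned_list)` should return True for your office or VPN address, where `planned_list` holds the Keebo entries plus your own ranges.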

Phase 2 — Keebo Onboarding Wizard

Once Phase 1 is complete and all values have been recorded in the Values Reference table, sign in to the Keebo portal and complete the onboarding wizard. Contact the Keebo customer success team if no one at the organization has accessed the portal before.

The wizard walks through four steps, each with inline validation to confirm that the configured Databricks objects are accessible.

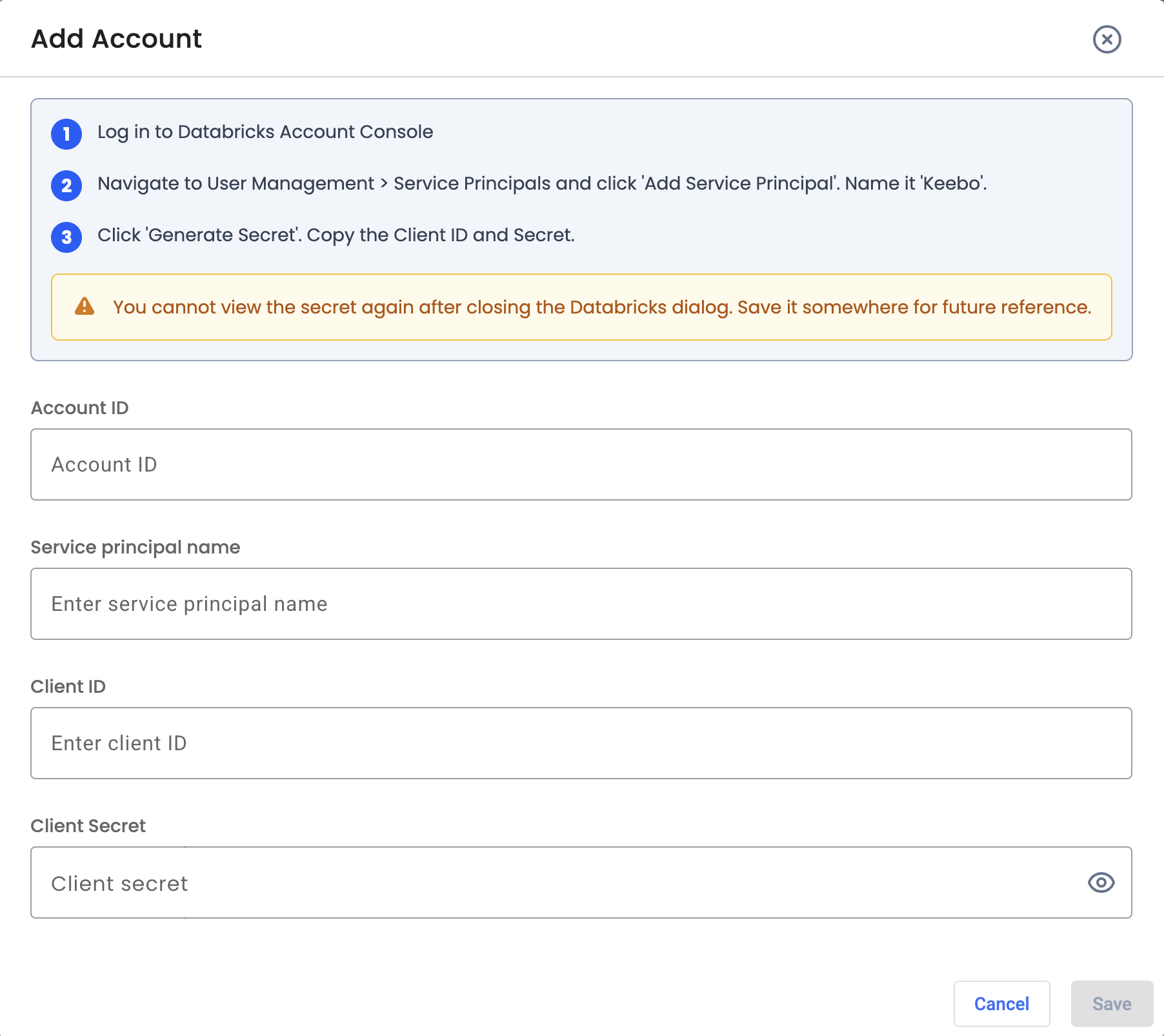

Step 1 — Service Principal

Enter the credentials collected during Phase 1:

- Account ID — from the Databricks Account Console URL

- Service principal name — the name given to the Keebo service principal (e.g. "Keebo")

- Client ID — from the service principal detail page

- Client Secret — generated during service principal setup

The wizard validates the credentials against the Databricks Account Console before proceeding.

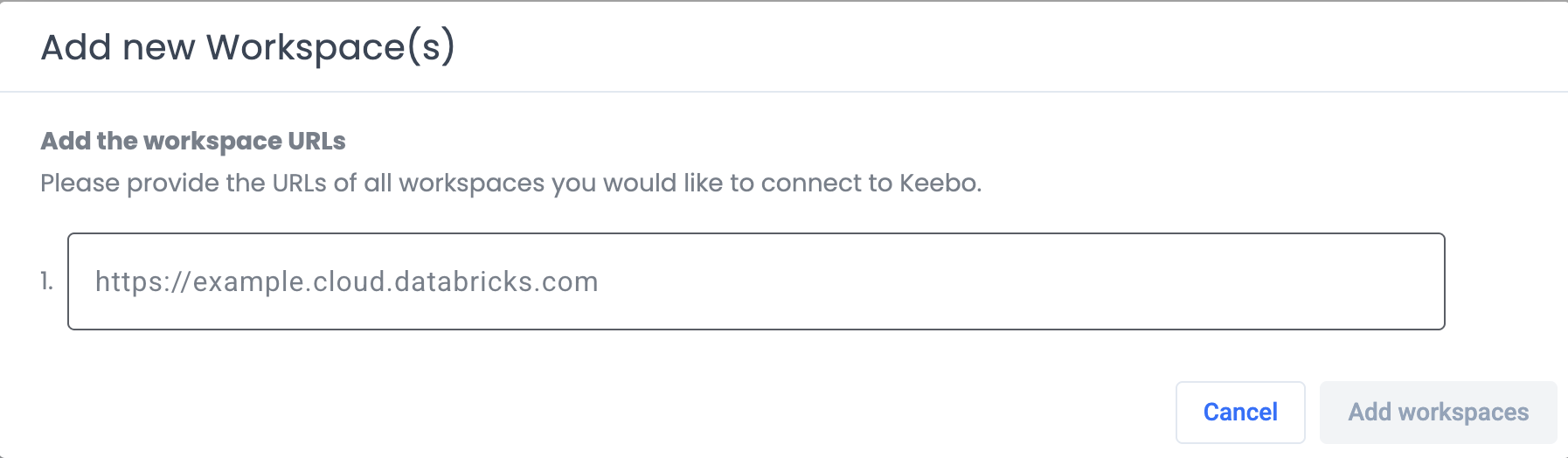

Step 2 — Workspaces

Enter the workspace URL for each workspace to connect. The workspace URL is the address bar URL when logged into the Databricks workspace (e.g. https://xyz.cloud.databricks.com).

Multiple workspaces can be added. The wizard validates that the service principal has User access on each workspace entered.
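A URL copied from the address bar often carries a path or query string. The sketch below trims it to the scheme-and-host form shown in the example; it assumes, without confirmation from Keebo, that this is the form the wizard expects, and `normalize_workspace_url` is a hypothetical helper.

```python
from urllib.parse import urlparse


def normalize_workspace_url(address_bar_url: str) -> str:
    """Reduce an address bar URL to https://<host>, dropping path and query."""
    parsed = urlparse(address_bar_url.strip())
    if parsed.scheme != "https" or not parsed.netloc:
        raise ValueError(f"not an https workspace URL: {address_bar_url!r}")
    return f"https://{parsed.netloc.lower()}"
```

Applying it to every entry before pasting keeps the list of workspace URLs consistent and makes duplicates easy to spot.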

Step 3 — Warehouses

Enter the warehouse ID for each SQL warehouse to optimize. The warehouse ID is displayed next to the warehouse name in SQL Warehouses in the Databricks left navigation.

The wizard validates that the service principal has CAN_MANAGE on each warehouse entered.

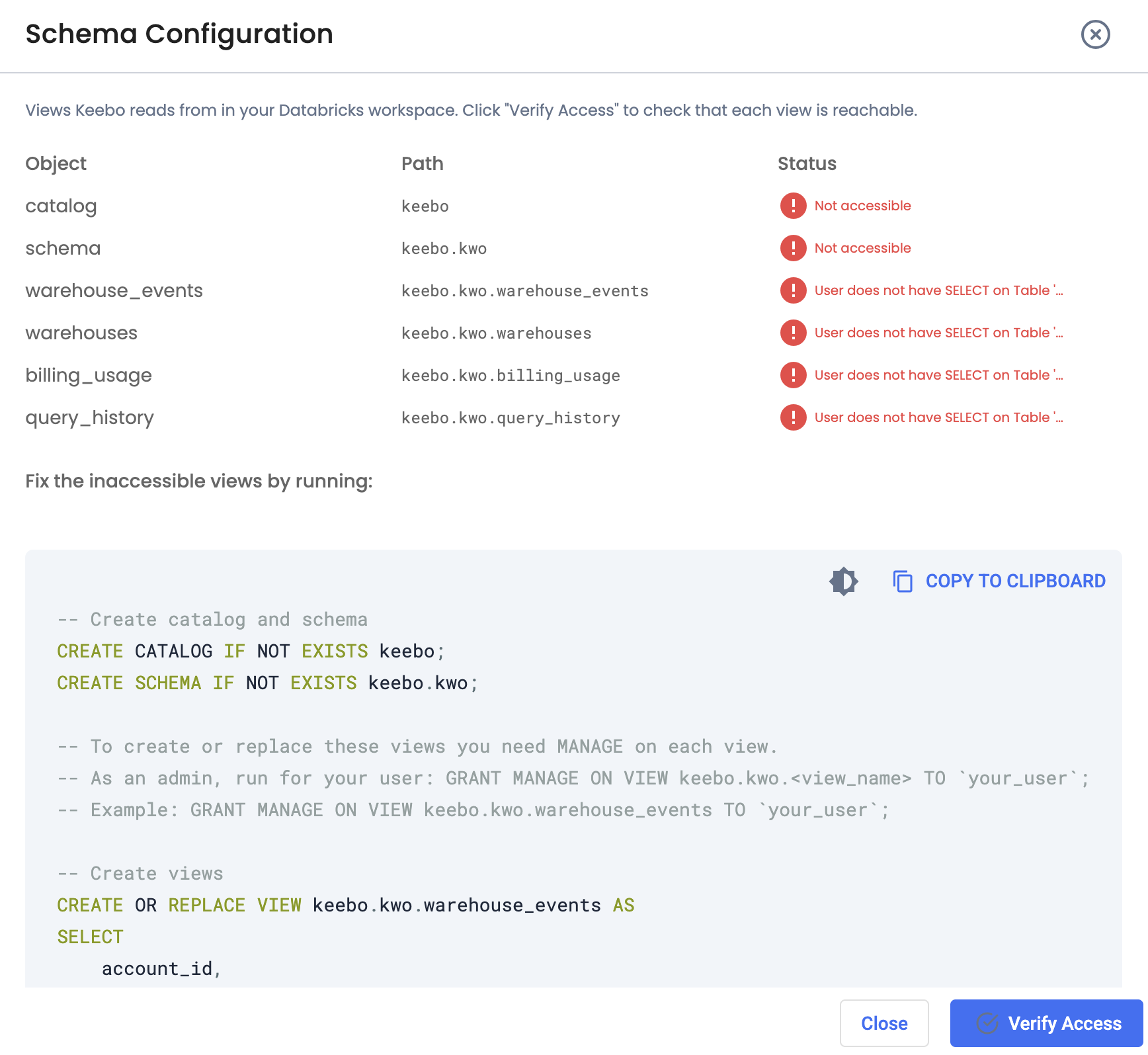

Step 4 — Schema Configuration

The wizard checks whether the required catalog, schema, four usage views, and the export volume (keebo.kwo.export) exist and are accessible to the Keebo service principal.

If anything is missing or not accessible, the wizard displays the exact SQL to run in the Databricks SQL Editor. Copy the script, run it in the workspace being connected, and click Verify Access again until all checks pass.

Once all checks pass — catalog, schema, all four views, and the export volume — the workspace is fully connected and Warehouse Optimization begins optimizing the selected warehouses.